The ECVP is an annual international conference that aims to provide a forum for the presentation and discussion of new developments in the scientific study of visual perception. Empirical, theoretical, and applied perspectives from the disciplines of psychology, neuroscience, and cognitive science are all welcome and encouraged. Since 1978, ECVP has been one of the largest international conferences in the field, attracting researchers from all over the world.

General Information

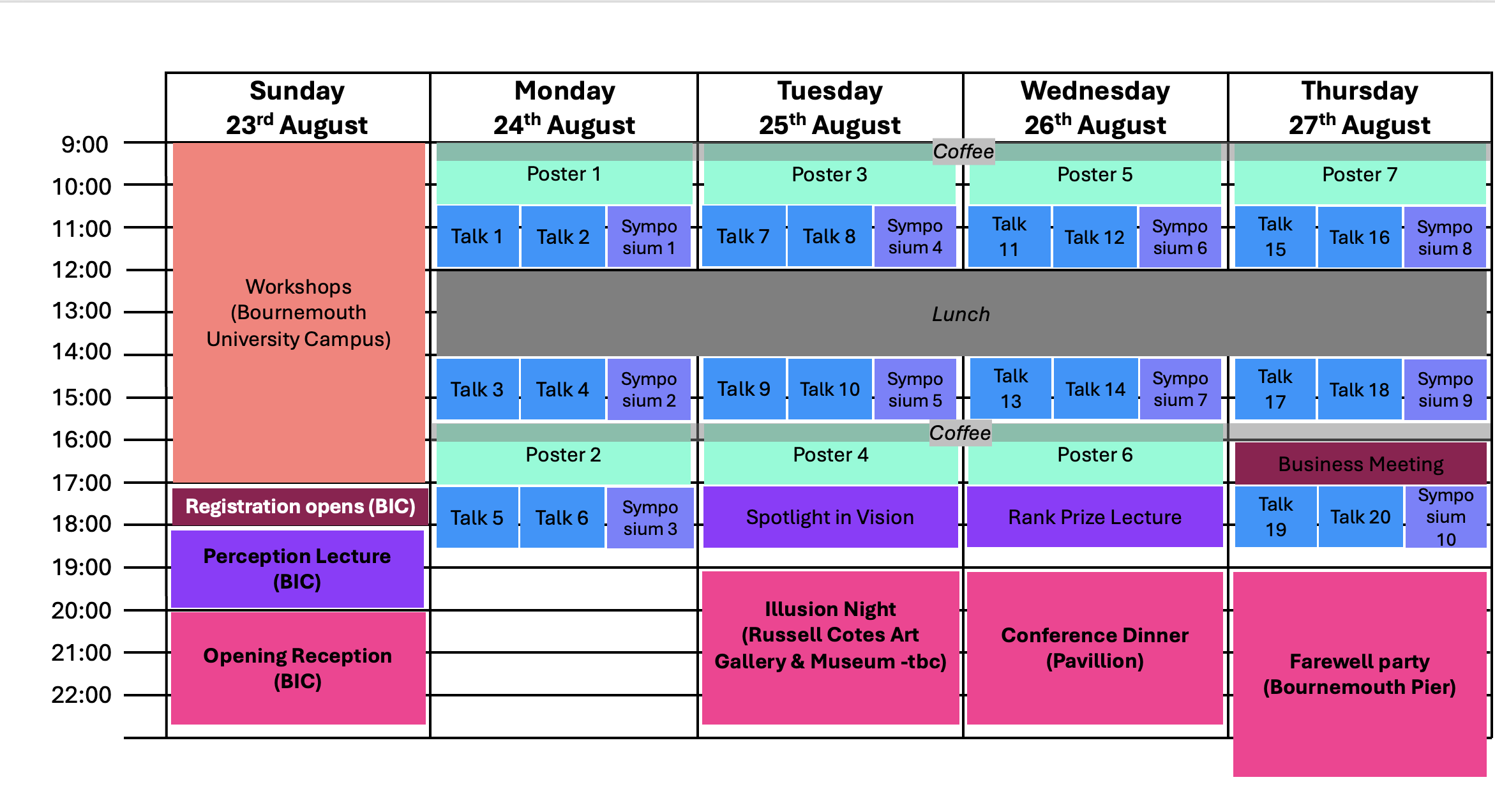

ECVP 2026 starts on Sunday, August 23rd with a series of hands-on workshops and ends on Thursday, August 27th with the Farewell Party.


On Sunday, August 23rd, ECVP 2026 in Bournemouth will kick off with a series of workshops that will take place on the University Campus in the morning and early afternoon. On Sunday evening, the Perception Keynote Lecture will be given by Karl Gegenfurtner (Justus Liebig University of Giessen) at the Bournemouth International Centre, followed by the Opening Reception.

From August 23rd to August 27th, all symposia, talk sessions, poster sessions and keynote lectures will take place at the Bournemouth International Centre, in the city centre, by the sea.

On Tuesday afternoon (August 25th), we will continue the Spotlight Lecture series, which showcases recent innovative and influential findings or methods in vision science. In 2026, the Spotlight in Vision Lecture will be given by Nadine Dijkstra (University College London). In the evening, the popular Illusion & Demo Night will take place (location tbc).

On Wednesday (August 26th), the traditional Rank Prize Lecture (tbc), this year given by Monica Gori (Istituto Italiano di Tecnologia), will take place in the afternoon, and the Conference Dinner will be held in the evening at the Pavilion.

Finally, the conference will conclude on Thursday (August 27th) with talks and poster sessions running into the late afternoon. In the evening, we will celebrate the end of the conference with the Farewell Party at Bournemouth Pier, a five-minute walk from the Bournemouth International Centre and literally on the sea.

Bournemouth International Centre

The main conference venue is the Bournemouth International Centre. It offers several conference rooms, some of which face the sea.

Russell Cotes Art Gallery & Museum

We plan to host the Illusion & Demo Night at the Russell Cotes Art Gallery & Museum. It is a historic Victorian seaside villa in Bournemouth filled with global art and collections, originally built as a private home and later donated to the public.

Program

Overview of our preliminary* conference schedule:

*Note: The days of the events are fixed; time slots may still change slightly.


The detailed online program will be available soon!

Conference venues

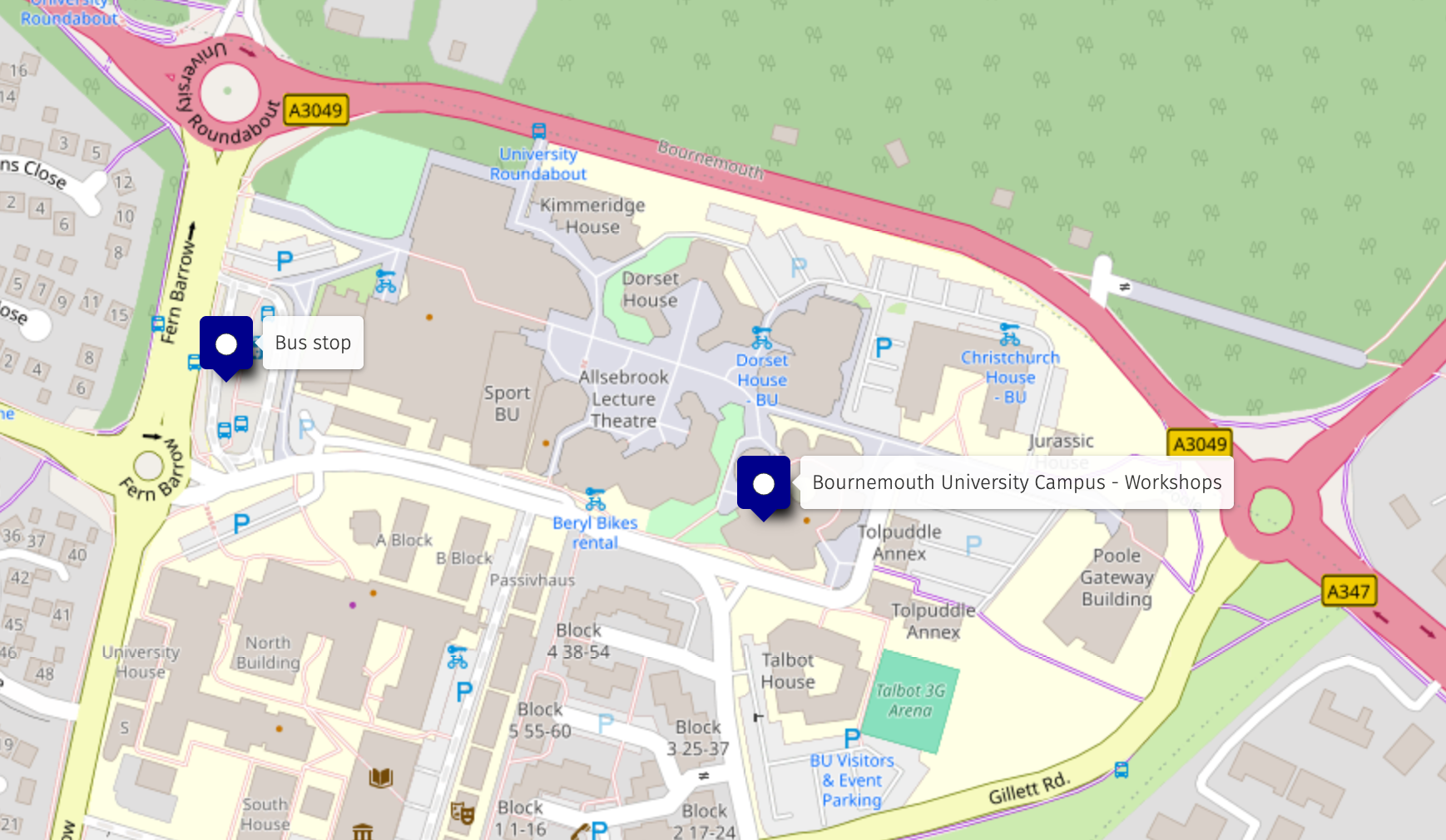

Click on the images below to open a map of all conference venues on OpenStreetMap.

Main conference venues by Bournemouth Beach

- Talks, poster sessions, keynote lectures, the Opening Reception and coffee breaks @ Bournemouth International Centre

- Illusion & Demo Night @ TBD

- Conference Dinner (Aug 26th) @ Bournemouth Pavilion

- Farewell Party (Aug 27th) @ Key West at the Bournemouth Pier

Workshops (Aug 23rd) take place on the Bournemouth University Talbot Campus.

Keynotes

We are thrilled to have secured three exceptional scientists as keynote speakers for ECVP 2026:

Perception Lecture

Karl Gegenfurtner

Justus Liebig University of Giessen

Aug 23rd @ Bournemouth International Centre (room tbc) | 18:00

Title: Color Vision — Then and Now

Sponsored by: SAGE – Perception / i-Perception

Spotlight in Vision Lecture

Nadine Dijkstra

University College London

Aug 25th | time tbd

Title: Distinguishing imagination from reality in a generative brain

Rank Prize Lecture

Monica Gori

Istituto Italiano di Tecnologia

Aug 26th | time tbd

Title: The Early Role of Vision in Multisensory Development

Sponsored by: Rank Prize

Perception Lecture – Karl Gegenfurtner – Color Vision — Then and Now

Abstract:

Many of the central questions in color vision have been with us for more than a century: How precisely can we discriminate colors? How stable are surface colors under changing illumination? And how should brightness and luminance be related to perception? This long tradition has produced a remarkably solid framework of theories, measurements, and standards. Yet its very success can also create the impression that the central questions have largely been settled. In this lecture I will argue that several classical problems in color vision remain surprisingly open. My current work revisits these questions using a new generation of experimental tools. Virtual reality allows color constancy to be studied in photorealistic scenes under nearly natural viewing conditions while maintaining full experimental control. Deep neural networks provide end-to-end models of vision that link color processing directly to tasks such as object recognition. Robust ranking paradigms enable large-scale online experiments to evaluate photometric standards and heterochromatic brightness. Finally, gamified tasks make it possible to measure color discrimination at unprecedented scale, helping to overcome the curse of dimensionality in color space. It is an exciting time in vision research, when new techniques allow us to find new answers to very old questions.

Spotlight in Vision Lecture – Nadine Dijkstra – Distinguishing imagination from reality in a generative brain

Abstract:

Our perception of reality is generative: to resolve ambiguous sensory input, our brain predicts the most likely interpretation using an internal model built on past experiences. This same mechanism allows us to generate sensory signals in the absence of input, giving rise to mental imagery. Since perception and imagery rely on the same generative mechanism, how do we know at any given moment that what we are experiencing is real and not just imagined? I will present studies that aim to answer this question using psychophysics, neuroimaging and computational modelling. The results suggest that higher-order brain regions monitor the precision of sensory signals to infer whether they reflect reality or imagination. I will end by discussing a novel research direction in which we will explore the interaction between reality inferences and our internal model of the world.

Rank Prize Lecture – Monica Gori – The Early Role of Vision in Multisensory Development

Abstract:

Developmental processing relies heavily on vision, which serves as a key organizer for integrating information from other senses. Vision indeed helps to shape how auditory and tactile signals are interpreted, supporting the development of coherent spatial and body representations. When visual input is missing early in life, as in blind infants and children, these representations are often altered, leading to difficulties in multisensory integration. Understanding how vision guides early sensory interaction reveals its foundational role in building a unified perception of the world and informs the design of accessible technologies that translate visual information into sound, vibration, or movement.

Symposia

Our symposia offer a diverse selection of research topics, featuring speakers from various career stages, research backgrounds, and global locations to encourage lively discussion and exchange of ideas. A symposium should provide a diverse overview of a research area of interest to the ECVP audience. Organizers should strive to include speakers from different career stages, research groups, and geographic locations who represent a broad range of views and ideas under the overarching theme of the symposium. Presentations should be related to each other and stimulate insightful discussion. The symposium schedule will be announced closer to the conference.

Please find more information about our submission guidelines on the Submissions page.

Symposium submissions are closed. The full list of symposia, including the preliminary schedule of speakers, is given below.

LIST OF SYMPOSIA:

1) The rhythmic nature of perception and attention: Evidence, challenges, and open questions

Organiser: Maëlan Q. Menétrey

- Xiaoyi Liu, Michael Arcaro, & Sabine Kastner: Dynamic modulation of endogenous attentional sampling under cue uncertainty

- Maëlan Q. Menétrey, Paola Biocchi, & David Pascucci: Testing oscillatory modulations in temporal summary statistics across visual features

- Laurie Galas, Mehdi Senoussi, Niko Busch, Ian Donovan, Sanne ten Oever, & Laura Dugué: Oscillatory dynamics of attention in sensory tuning and perceptual processing

- Domenica Veniero, Francesca Nannetti, & Gregor Thut: Effects of neural oscillations on visual performance during attentional deployment

- Clare Press, Aaron Kaltenmaier, Quirin Gehmacher, Matthew H. Davis, & Peter Kok: Integrating fixed and flexible rhythms

2) Beyond local features: Spatiotemporal structure in perception and neural processing

Organisers: David Pascucci & Michael H. Herzog

- David Pascucci & Árni Kristjánsson: Seeing the trees through the forest: Spatiotemporal routines in visual cognition

- Steven Scholte: Binding for whom? From features and observers to action in a structured world

- Michael H. Herzog: A half second of spatiotemporal, contextual processing precedes conscious perception

- Lucia Melloni, Simon Henin, & Charan Ranganath: From local features to event structure: Surprise, prediction, and the dynamics of contextual vision

- Laura Dugué, Yue Kong, Kirsten Petras, & David M. Alexander: Traveling waves as a spatiotemporal neural mechanism for perception and attention

3) What vision scientists can learn from continuous movement tracking during decision making

Organiser: Elahe’ Yargholi

- Tijl Grootswagers, Genevieve L. Quek, & Manuel Varlet: Movement trajectories reveal the connection between emerging neural representations and dynamic decisions

- Timothée Maniquet, Huangxu Fang, N. Apurva Ratan Murty, & Hans Op de Beeck: Predicting categorisation motor movements from cortical category selectivity

- Elahe’ Yargholi, Timothée Maniquet, Joost Vennekens, Jan Van den Stock, & Hans Op de Beeck: Continuous movement tracking during decision making regarding visual scenes reveals the competition between scene and people valence

- Maximilian P. Wolkersdorfer & Omar F. Jubran: Introduction to Spatiotemporal Survival Analysis: New insights from distribution analyses of movement trajectories

- Omar F. Jubran, Jia Li, Maximilian P. Wolkersdorfer, Thomas Schmidt, Cees van Leeuwen, & Thomas Lachmann: Modelling Hand Movement Trajectories in Rapid Chase

4) Vision on the Move: From Eye Movements to Visual Encoding (and back)

Organisers: Antonella Pomè & Alessandro Benedetto

- Alessandro Benedetto, Michele A. Cox, Jonathan D. Victor, & Michele Rucci: Oculomotor contribution to synchronization of cortical activity

- Nina M. Hanning & Martin Rolfs: From monocular input to binocular action: How pre-saccadic motion shapes post-saccadic following

- Antonella Pomè & Eckart Zimmermann: Feedback and feedforward contributions to perceptual stability during self-motion

- Cristina de la Malla & Martina Poletti: Temporal dynamics of visual processing during the course of fixation

5) Probing the Visual System: Illusions as Windows into Typical and Atypical Cognition

Organisers: Erez Freud & Elisabeth Hein

- Erez Freud: Atypical Development Disrupts the Perception-Action Dissociation: Evidence from Visual Illusions

- Batsheva Hadad: Altered Magnitude Matching Between Sensory Modalities in Autism

- Elisabeth Hein: Ambiguous apparent motion perception in 5–7-year-old neurotypical children

- Irene Sperandio: Stress and visual illusions: Is there a relationship?

6) Visuomotor transforms in prostheses, virtual reality, and teleoperation

Organiser: Emily Crowe

- Simon Watt & Molly Hewitt: Grasping with altered hands: (why) are some sensorimotor transformations more intuitive than others?

- Claire Yuke Pi, Marco Gillies, Dorothy Cowie, & Xueni Pan: Reaching with an extended arm in virtual reality: experience and performance

- Loes van Dam & Celine Honekamp: Adapting to sensorimotor delays in continuous motor control tasks

- Emily Crowe, Gift Odoh, John Kiran, Raul Ghisa, Daniel Torres Ruiz, Harun Tugal, & Ayse Kucukyilmaz: Learning novel visuomotor mappings in remote control

- Peter Scarfe, Rea Gill, & Chloe Irwin: Sensorimotor transforms and teleoperation in the nuclear industry

7) Perception as Inference Across Scales — from natural scene statistics to neural computation and clinical translation

Organisers: Guido Maiello & Veronica Pisu

- Erich Graf, Wendy Adams, & James Elder: SYNS: A Decade of Natural Scenes for Perception Science

- Charles Leek, Filipe Cristino, & Dietmar Heinke: The Integration of Shape Information Across Spatial Scales during the Visual Perception of 3D Object Geometry: Evidence from Deep Neural Networks, ERPs and Eye Tracking

- Arezoo Pooresmaeili, Nathan Huneke, & Guido Maiello: Reward Learning Reshapes Neural Representations of Visual Perception and Imagery

- Christoph Witzel: Colour Inference in the Wild: #theDress Ten Years On

- Veronica Pisu, Aleksandrs Koselevs, Sarah Cutler, Maria Patsiamanidi, Sam Khandhadia, Isabel Escofet, Jay Self, Andrew Lotery, & Guido Maiello: Continuous Psychophysics in the Clinic

8) Strategies for searching: better understanding visual foraging through the study of individual differences

Organisers: Anna E. Hughes & Jérôme Tagu

- Ian M. Thornton & Árni Kristjánsson: Foraging tempo revisited: How does time pressure contribute to individual differences in target selection behaviour?

- Maha Haggouch, Karine Doré-Mazars, & Jérôme Tagu: How working memory shapes visual foraging behaviour: insights from individual differences

- Sarah K. Salo, Matthew E. Roser, & Alastair D. Smith: Immersive virtual reality for probing visual foraging across the lifespan

- Manjiri Bhat, Daniel Smith, Alexis Cheviet, Anna Hughes, & Alasdair Clarke: Visual foraging patterns as distinguishing features: A predictive model for PSP vs. Parkinson's Disease

9) Recent Advances in Face Perception and Identification: From Cells to Cognitive Mechanisms and Applied Identification

Organisers: Alejandro J. Estudillo & Christel Devue

- Bruno Rossion, Marie-Alphée Laurent, Jacques Jonas, Sophie Colnat-Coulbois, Laurent Koessler, & Corentin Jacques: The cellular basis of the human face inversion effect

- Louise Ewing, Inês Mares, Rachel Bennetts, Sarah Bate, Nadja Althaus, Michael Papasavva, Emily K. Farran, & Marie L Smith: A cross-syndrome investigation of face selective processing abilities in Williams Syndrome and Down syndrome

- Anna Bobak: Predictors of lineup accuracy in 2D and 3D displays: An individual differences approach

- Christel Devue, Morgan Reedy, Gamze Ceylan, & Mariame Bourard: Context-induced distinctiveness in face learning: Evidence across multiple facial appearance cues

- Alejandro J. Estudillo, Olivia Dark, Jan Wiener, & Sarah Bate: Navigating toward domain-specific accounts of face identification: evidence from individuals with developmental prosopagnosia and super-recognizers

10) Artificial Intelligence as a Window into Material Perception: From Minimal Models to Active and Individualized Representations

Organisers: Masataka Sawayama & Filipp Schmidt

- Arash Akbarinia, Alban Flachot, Matteo Toscani, & Raquel Gil-Rodriguez: Spatiochromatic Representation in Discrete Autoencoders

- Anna Metzger & Matteo Toscani: Unravelling constancy mechanisms in haptic perception of materials using DNN modelling

- Takuma Morimoto, Arash Akbarinia, Katherine R. Storrs, Jacob R. Cheeseman, Hannah E. Smithson, Karl R. Gegenfurtner, & Roland W. Fleming: Tiny convolutional neural networks reveal computations underlying human gloss perception

- Masataka Sawayama: Personalized Modeling of Material Perception in Autism Spectrum Disorder

- Vincent Graham, Chenxi Liao, & Bei Xiao: Active Perception of Real-World Materials Using 3D Gaussian Splatting

Workshops

On Sunday, August 23rd, a series of hands-on science skill workshops will be held on the Bournemouth University Talbot Campus (rooms to be decided).

LIST OF WORKSHOPS:

Workshops run from 9:00 to 17:00 (precise times to be decided).

1) VR Foundations for Researchers in Experimental Psychology

Organizers: Markus Bindemann, Rachael Taylor and Lisa Huerta (University of Kent)

Virtual Reality (VR) systems have advanced rapidly in recent years. They now allow researchers to immerse participants in complex and increasingly realistic three-dimensional environments, while preserving the experimental control familiar from the laboratory. For example, modern VR supports the precise measurement of behaviour that is necessary for a wide range of research in experimental psychology, such as button presses, eye tracking, gesture recognition, and spatial position tracking, while visual and auditory stimuli can be presented with precise timing.

These capabilities place VR between the two dominant approaches in experimental psychology: highly controlled laboratory experiments and field studies. Laboratory experiments rely on carefully selected visual material, usually presented in isolation without extraneous background or social context, while field studies can provide insights that hold greater ecological validity, but relinquish systematic control over the many variables at play. VR provides an intermediate alternative to these traditional approaches by enabling the systematic study of human behaviour from within the confines of the laboratory whilst also capturing the realism of more complex environments and social interactions.

Despite its growing profile, the use of VR in psychological research still lags behind its technical potential. Many VR studies simply replicate traditional lab paradigms and fail to exploit the complexity and realism current systems can provide. It is easy to understand why these shortcomings arise, as the implementation of VR requires some new skill sets and fundamental changes to the way in which experiments are typically conducted. Although VR has been available for some time, most researchers lack training in its use and the technology can seem daunting.

The goal of this workshop is to bring together researchers who wish to adopt VR but are unsure how to begin. We will demonstrate the construction of virtual environments with Unity, a cross-platform engine for creating 3D projects in virtual reality that is free for personal use. We will then demonstrate how these environments can be turned into experiments that run in a VR headset and record participants' responses. Attendees will receive detailed materials covering these activities so that they can continue learning and adopt these methods independently.

2) You should program your statistical models in Stan. It is easier than you think, and I’ll show you how.

Organizer: Alexander Pastukhov (Otto-Friedrich-Universität Bamberg)

In our research, we constantly rely on statistical models. Multiple R and Python packages provide models of various complexity, from a basic t-test to generalized linear mixed models. However, these packages constrain the structure and flexibility of the models you can use. In this workshop, I will introduce an alternative approach: programming a statistical model from scratch using the Stan probabilistic programming language. I will introduce the syntax and walk you through an example of programming a basic two-group/condition comparison (a.k.a. a t-test), showing how it can be extended to allow for different distribution families and regularization. Next, I will show how Stan simplifies working with interactions using a two-way ANOVA. Finally, I will explain the underlying mechanisms of the MCMC engine, its limitations, how to diagnose problems, and ways to side-step them.

This workshop is aimed at a broad audience and requires basic knowledge of statistics and programming (either R or Python). Its aim is to introduce a flexible method that allows you to program virtually any statistical model you are interested in.

Prerequisite Knowledge:

- Basic knowledge of R or Python

- Basic understanding of statistics

Prerequisite Materials:

- R + RStudio + the cmdstanr package, plus your choice of packages for data wrangling and visualization (e.g., Tidyverse), or

- Python + the cmdstanpy package, plus your choice of packages for data wrangling and visualization (e.g., pandas + numpy + matplotlib/seaborn).

3) Reproducible research reports with Quarto

Organizers: Eline Van Geert & Lisa Koßmann – KU Leuven, Belgium

Are you conducting research that includes analyses performed in Python or R? Tired of manually copy-pasting results from your analysis software into Word or LaTeX documents? Learn how to enhance the reproducibility of your work by creating reproducible research reports with Quarto. With Quarto, you directly combine text, code, and code outputs into custom formatted documents (including PDF/Word/HTML reports), articles, presentations, books, or websites. You can even add interactive elements to your documents to help readers explore your results.

In this workshop, we introduce participants to this versatile open-source scientific and technical publishing system. We discuss the basic components of creating a Quarto document, and demonstrate how to render it to different output formats. We also point you to additional resources for more complex uses of Quarto. If possible, please have one of the following editors installed before the workshop: Jupyter, Visual Studio Code, or RStudio (cf. https://quarto.org/docs/get-started/).

4) Advanced stimulus presentation using Tobii Pro Lab and/or other third-party software

Organizer: Tobii

In this Tobii workshop, we will go through the process of setting up advanced stimulus presentations using different software options tailored to the task. Using the tools we have, we will define some example eye tracking setups, some built with Tobii Pro Lab's Advanced Screen Project and others with tools such as our SDK, the Titta toolbox, and PsychoPy.

5) Hands-On Multimodal Eye Tracking and EEG Setups with Tobii and Brain Products

Organizer: Tobii

In this workshop, Tobii has partnered with Brain Products to present a comprehensive, step-by-step guide on setting up multimodal systems. We will cover how to design an experiment with EEG in mind, configure synchronization options using Pro Lab and the USB-to-TTL box from Brain Products, and record an experiment using this setup. Additionally, we will provide a complete analysis pipeline for the data produced.

NOTE: More workshops will be posted soon, including one organised by Cambridge Research Systems and one by Testable.